How to Set Up JetBrains Databao for Data Analysis

Last updated Apr 2, 2026

What Databao Does and Why It Matters

Databao is a data analysis tool from JetBrains that lets you ask questions about your data in plain English and get answers as text, interactive charts, or tables. It launched in February 2026 and drew attention for ranking first on the Spider 2.0 DBT benchmark, which tests how well AI agents can read a real dbt repository, understand broken models, implement fixes, and validate the results by running actual code.

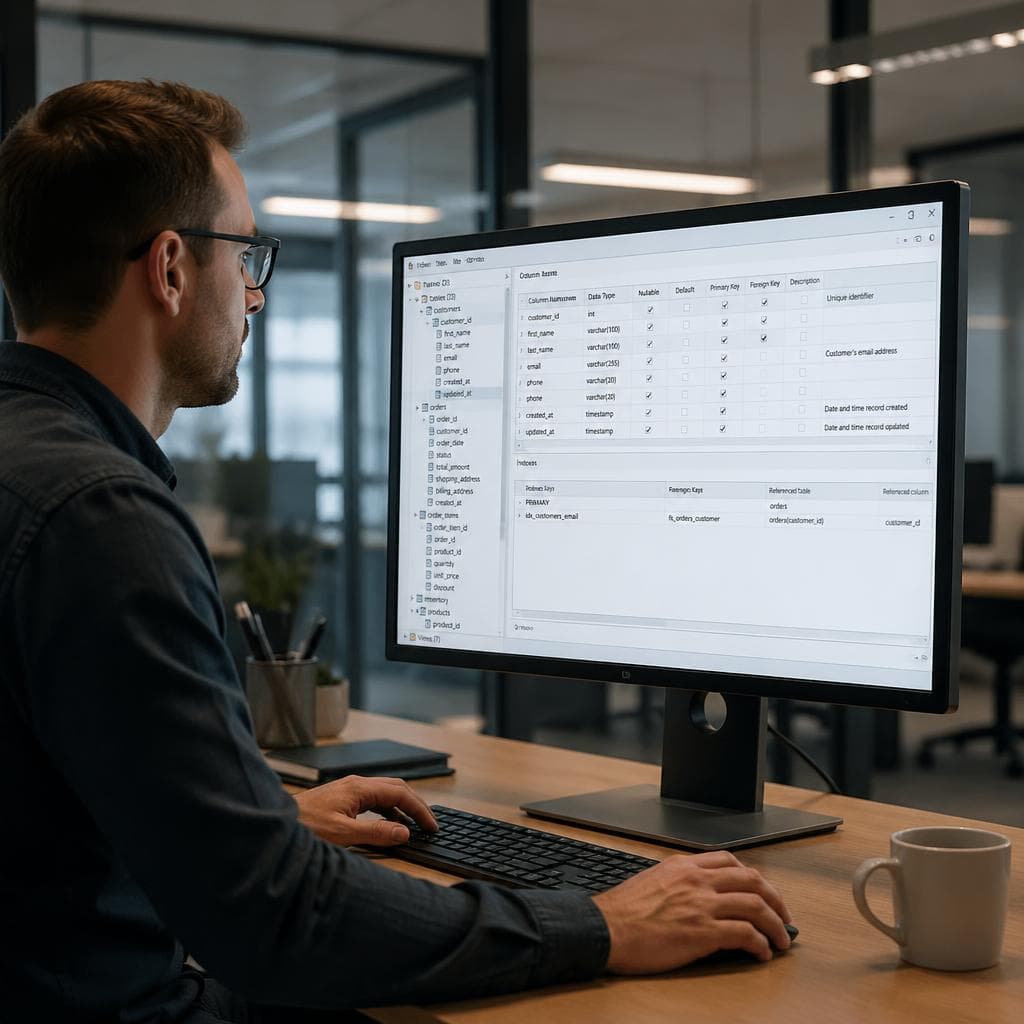

The tool has two core components. The Context Engine is a CLI that extracts schema and metadata from your databases, BI tools, and documentation to build a governed semantic layer. The Data Agent is an open-source Python SDK that uses that context to generate production-quality SQL and visualizations. Together, they solve a persistent problem in data work: getting reliable, repeatable answers from AI without manually copying schemas into prompts every time.

Prerequisites

Before starting, make sure you have the following ready:

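Python 3.10 or higher installed on your machine. You can verify by running python --version in your terminal (or python3 --version on systems where the python command is missing or points to an older interpreter).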

A database you want to query. Databao supports PostgreSQL, MySQL, Snowflake, BigQuery, DuckDB, SQLite, and Pandas DataFrames. For this guide, we will use PostgreSQL as the example, but the pattern is the same for any supported source.

An API key for your preferred LLM. Databao works with OpenAI models (GPT-4o, GPT-4o-mini), Anthropic models (Claude), and local models through Ollama. You only need one.
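The Python version requirement above can also be checked from inside the interpreter you plan to use, which helps when several Python installations coexist. The following is a minimal sketch; the helper name python_is_supported is made up for this guide and is not part of Databao.

```python
import sys

# Minimum version stated in the prerequisites above.
MIN_PYTHON = (3, 10)


def python_is_supported(version_info=sys.version_info, minimum=MIN_PYTHON):
    """Return True if the interpreter's (major, minor) meets the minimum."""
    return tuple(version_info[:2]) >= minimum


if __name__ == "__main__":
    current = ".".join(str(part) for part in sys.version_info[:3])
    if python_is_supported():
        print(f"Python {current} meets the 3.10+ requirement")
    else:
        print(f"Python {current} is too old; 3.10 or higher is required")
```

Run it with the same python command you will later use for pip install, so that the check and the installation refer to the same interpreter.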

Step 1: Install Databao

Open your terminal and install the Databao agent package:

pip install databao-agent

This installs both the Python SDK and the CLI tool. If you want to use the Context Engine separately for managing semantic layers across your team, you can also install it standalone:

pip install databao-context-engine

For most individual users starting out, the agent package is all you need.

Step 2: Configure Your LLM

Databao needs an LLM to translate your natural language questions into SQL. Set your API key as an environment variable based on your provider.

For OpenAI:

export OPENAI_API_KEY="your-key-here"

For Anthropic:

export ANTHROPIC_API_KEY="your-key-here"

For local models through Ollama, no API key is needed. Just make sure Ollama is running and specify the model name in the format ollama:model-name when configuring the agent.
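Before running the agent, it can be worth confirming which provider your environment is actually set up for. The sketch below only inspects the two environment variables named above and falls back to Ollama when neither is set. The function name detect_llm_provider and the preference order (OpenAI first) are assumptions made for illustration, not Databao behavior.

```python
import os


def detect_llm_provider(env=None):
    """Guess which LLM provider is configured from environment variables.

    Returns "openai", "anthropic", or "ollama" (the no-key fallback).
    The preference order here is an arbitrary choice for this sketch.
    """
    env = os.environ if env is None else env
    if env.get("OPENAI_API_KEY", "").strip():
        return "openai"
    if env.get("ANTHROPIC_API_KEY", "").strip():
        return "anthropic"
    # No hosted-provider key found: assume a local Ollama model is intended.
    return "ollama"


if __name__ == "__main__":
    print(f"Detected provider: {detect_llm_provider()}")
```

A variable that is set but blank is treated as missing, which catches a common copy-paste mistake in shell profiles.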

Step 3: Connect Your Database

Create a Python script to connect Databao to your data source. The agent uses SQLAlchemy under the hood, so if you have worked with SQLAlchemy before, the connection pattern will be familiar.

from sqlalchemy import create_engine

import databao.agent as bao

# Create a database connection

engine = create_engine("postgresql://user:password@localhost:5432/mydb")

# Configure the LLM

llm_config = bao.LLMConfig(name="gpt-4o-mini", temperature=0)

# Create a domain and register your database

domain = bao.domain()

domain.add_db(engine)

# Initialize the agent

agent = bao.agent(domain, name="my-analysis", llm_config=llm_config)
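The connection string above hard-codes the user name and password, which is fine for a local experiment but easy to leak once the script is shared. One possible alternative is to assemble the URL from environment variables. The sketch below assumes the libpq-style names PGUSER, PGPASSWORD, PGHOST, PGPORT, and PGDATABASE; the helper postgres_url_from_env is hypothetical and not part of Databao or SQLAlchemy. The user name and password are percent-encoded so that characters such as @ or / do not break the URL.

```python
import os
from urllib.parse import quote_plus


def postgres_url_from_env(env=None):
    """Build a postgresql:// URL from libpq-style environment variables."""
    env = os.environ if env is None else env
    # Required values: a KeyError here means the variable is not set.
    user = quote_plus(env["PGUSER"])
    password = quote_plus(env["PGPASSWORD"])
    database = env["PGDATABASE"]
    # Optional values with the same defaults as the example above.
    host = env.get("PGHOST", "localhost")
    port = env.get("PGPORT", "5432")
    return f"postgresql://{user}:{password}@{host}:{port}/{database}"
```

The returned string can be passed to create_engine in place of the literal connection string.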

For DuckDB, pass a DuckDBPyConnection object instead of a SQLAlchemy engine:

import duckdb

conn = duckdb.connect("my_data.duckdb")

domain.add_db(conn)

For Pandas DataFrames, you can register them directly without any database connection at all:

import pandas as pd

df = pd.read_csv("sales_data.csv")

domain.add_df(df, name="sales")

Step 4: Ask Questions

Once the agent is configured, create a thread and start asking questions:

thread = agent.thread()

# Get a text answer

thread.ask("What were total sales by region last quarter?")

print(thread.text())

# Get results as a DataFrame

df = thread.ask("Show me the top 10 customers by revenue").df()

print(df)

# Generate a chart

plot = thread.plot("Bar chart of monthly revenue for 2025")

Each thread maintains conversational context, so follow-up questions work naturally. You can ask "Now break that down by product category" and the agent understands what "that" refers to.
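To see why follow-ups work, it helps to picture a thread as an object that remembers earlier turns and hands all of them to the model on each new question. The toy class below illustrates that general idea only. ToyThread is a stand-in written for this guide, it does not call an LLM, and it is not how Databao implements threads.

```python
from dataclasses import dataclass, field


@dataclass
class ToyThread:
    """Illustrative stand-in for a conversation thread (not Databao's class)."""

    history: list = field(default_factory=list)

    def ask(self, question):
        # Record the question, then build the prompt from the full history so
        # that a follow-up like "that" can be resolved against earlier turns.
        self.history.append(question)
        return self.build_prompt()

    def build_prompt(self):
        numbered = [f"{i}. {q}" for i, q in enumerate(self.history, start=1)]
        return "Conversation so far:\n" + "\n".join(numbered)


if __name__ == "__main__":
    thread = ToyThread()
    thread.ask("What were total sales by region last quarter?")
    print(thread.ask("Now break that down by product category"))
```

Because each ToyThread owns its own history, starting a new thread gives you a clean context, which is also a reasonable habit when you switch to an unrelated question.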

Step 5: Use the CLI for Quick Queries

If you prefer working from the terminal instead of writing Python scripts, the Databao CLI lets you query databases directly:

databao init

databao add-source postgres --connection-string "postgresql://user:pass@host/db"

databao ask "How many orders were placed this week?"

The CLI also supports running Databao as an MCP server, which means you can connect it to tools like Claude Desktop or any MCP-compatible client for a chat-based data analysis experience.

Step 6: Add Semantic Context (Optional)

This step is optional but becomes valuable as your queries get more complex. The Context Engine lets you define business logic so the agent understands that "active users" means users who logged in within the last 30 days, or that "revenue" always excludes refunds.

databao context init

databao context scan --source postgres

The scan command reads your database schema and generates an initial context file. You can then edit this file to add business definitions, relationships between tables, and custom metrics. Once the context is in place, the agent uses it automatically to generate more accurate queries.
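The "active users" definition mentioned above is a good example of why business context matters: the same question produces different numbers depending on which definition the SQL encodes. The sketch below uses Python's built-in sqlite3 module and a hypothetical logins table to compare a naive count with the 30-day definition. The table, column names, and dates are invented for illustration and are unrelated to any Databao context file format.

```python
import sqlite3

# Hypothetical schema: one row per login event.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE logins (user_id INTEGER, logged_in_at TEXT)")
conn.executemany(
    "INSERT INTO logins VALUES (?, ?)",
    [
        (1, "2026-03-30"),  # within the last 30 days
        (1, "2026-01-05"),
        (2, "2026-02-01"),  # older than 30 days
        (3, "2026-03-15"),  # within the last 30 days
    ],
)

REFERENCE_DATE = "2026-04-02"  # fixed so the example is reproducible

# "Active users": distinct users with a login in the 30 days before the reference date.
ACTIVE_USERS_SQL = """
    SELECT COUNT(DISTINCT user_id)
    FROM logins
    WHERE logged_in_at >= date(?, '-30 days')
"""

active_users = conn.execute(ACTIVE_USERS_SQL, (REFERENCE_DATE,)).fetchone()[0]
# Naive reading of "users": everyone who has ever logged in.
naive_users = conn.execute("SELECT COUNT(DISTINCT user_id) FROM logins").fetchone()[0]

print(active_users, naive_users)  # prints: 2 3
```

Without a stated definition, an agent has no way to know which of these two queries you meant, and a shared definition is how that ambiguity gets resolved.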

Common Setup Issues

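If you get import errors after installation, make sure you are using Python 3.10 or higher. Databao uses recent Python features that are not available in older versions. Also check that pip installed the package into the same interpreter that runs your script; running python -m pip install databao-agent removes that ambiguity.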

If the agent generates incorrect SQL, adding semantic context (Step 6) often helps. Without context, the agent relies solely on column names and data types, which can be ambiguous.

For Snowflake and BigQuery connections, make sure your authentication credentials are configured in your environment. Snowflake uses the standard snowflake-connector-python auth flow, and BigQuery uses Google Cloud credentials.
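For BigQuery, a quick pre-flight check can tell you whether a credentials file is discoverable before you start debugging the connection itself. The sketch below looks in two places used by Google's Application Default Credentials: the GOOGLE_APPLICATION_CREDENTIALS environment variable and the default file written by gcloud auth application-default login on Linux and macOS. The helper name find_google_credentials is made up for this guide, and it does not cover Windows paths or credentials supplied by a cloud metadata server.

```python
import os
from pathlib import Path


def find_google_credentials(env=None, home=None):
    """Return the path of a Google credentials file if one is found, else None.

    Checks the GOOGLE_APPLICATION_CREDENTIALS variable first, then the default
    location written by `gcloud auth application-default login` on Linux/macOS.
    """
    env = os.environ if env is None else env
    home = Path.home() if home is None else Path(home)

    explicit = env.get("GOOGLE_APPLICATION_CREDENTIALS")
    if explicit and Path(explicit).is_file():
        return Path(explicit)

    default = home / ".config" / "gcloud" / "application_default_credentials.json"
    return default if default.is_file() else None


if __name__ == "__main__":
    found = find_google_credentials()
    print(f"Credentials file: {found}" if found else "No credentials file found")
```

A result of None does not prove that authentication will fail, but it is a useful first hint when a BigQuery connection is rejected.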

What Sets Databao Apart

According to JetBrains, one key differentiator is the separation of context from the agent. Most AI data tools embed their understanding of your schema directly in prompts. Databao externalizes this into a governed semantic layer that multiple agents and team members can share. In benchmarks, this approach helped it achieve the top score on Spider 2.0 DBT, outperforming tools that rely on prompt engineering alone.

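The tool is fully open-source and runs locally, which matters for teams working with sensitive data that cannot be sent to third-party services. Keep in mind that with a hosted LLM such as OpenAI or Anthropic, your questions and whatever context the agent puts in its prompts still go to that provider; to keep everything on your own machine, pair Databao with a local model through Ollama. If you want to skip setup entirely and query data from a file upload with no configuration, tools like VSLZ handle that workflow from a browser with a single prompt.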

Next Steps

Once you are comfortable with the basics, explore connecting multiple data sources to a single domain, sharing context files across your team through version control, and setting up the MCP server integration for conversational data analysis in your preferred chat client.

FAQ

What databases does Databao support?

Databao supports PostgreSQL, MySQL, Snowflake, BigQuery, DuckDB, SQLite, and Pandas DataFrames. Relational databases connect through SQLAlchemy engine objects, DuckDB uses its native Python connection, and Pandas DataFrames can be registered directly without any database at all.

Can I use Databao with local LLMs instead of OpenAI?

Yes. Databao works with local models through Ollama. Specify the model in the format ollama:model-name when configuring the LLM. Compatible local inference servers include Ollama, LM Studio, llama.cpp, and vLLM. No API key is needed for local models.

Is Databao free to use?

The Databao agent and context engine are both open-source and free. They are available on GitHub under the JetBrains organization. You will need your own LLM API key or a local model to power the natural language to SQL translation, but Databao itself has no licensing cost.

How does Databao compare to ChatGPT for data analysis?

ChatGPT requires you to upload data or paste schemas into the prompt each time. Databao connects directly to your live database and maintains a persistent semantic layer that captures business definitions and table relationships. As a result, queries tend to be more accurate and repeatable, especially for complex multi-table joins and domain-specific terminology.

What is the Databao Context Engine?

The Context Engine is a CLI tool that scans your databases, BI tools, and documentation to generate a governed semantic layer. This layer defines business logic like what active users means or how revenue is calculated. The data agent uses this context automatically to generate more accurate SQL, reducing hallucinations and incorrect joins.