How to Set Up Pecan AI Predictive Agent

Last updated Apr 1, 2026

Pecan AI's Predictive Agent, launched January 28, 2026, lets operations managers and analysts build machine learning forecasts for churn, demand, and lifetime value without writing code or working with a data scientist. The platform converts a plain-English business question into a trained model in 15 to 60 minutes. This guide covers data requirements, the five-step setup workflow, training options, and what to expect from the output.

What the Predictive Agent Does

The Predictive Agent does not query your database and return a table of results. It coordinates multiple sub-agents that analyze the structure of your data, run data quality checks, select predictive features from up to 1,500 available signals, train and validate a machine learning model, and write scored predictions back to your data warehouse or CRM. Pecan calls this analysis of your data structure the "fingerprint" phase.

The end result is a ranked output table. For a churn model, it shows which customers are most likely to leave in the next 30, 60, or 90 days, along with a confidence score and a short list of contributing factors. For demand forecasting, it projects future volumes by product and time period. For lead scoring, it ranks open pipeline by conversion probability.

Pecan was named a 2025 Gartner Cool Vendor in data science and machine learning. Separately, the company launched DemandForecast.ai, a vertical product built on the same agent infrastructure.

Data Requirements Before You Start

Before creating an account or connecting a data source, confirm your data meets these minimums.

Pecan requires at least 1,000 historical rows to train a model. Accuracy improves substantially with larger datasets. The platform handles messy or incomplete data automatically, including missing values and inconsistent column formatting. Personally identifiable information (PII) is not required.

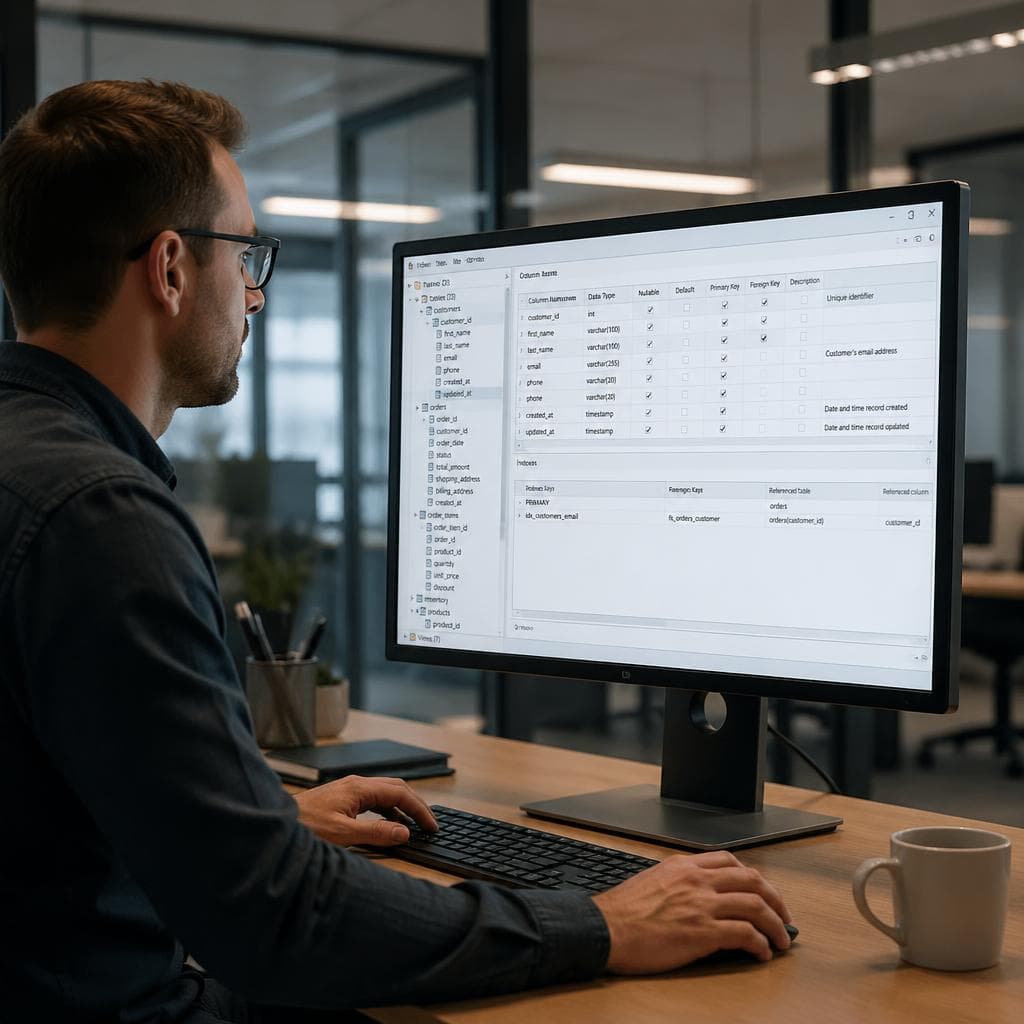

Your data must include two specific columns: an entity identifier (a customer ID, user ID, or order ID) and a date column that marks the historical moment from which the model should learn. Without a date column properly mapped, the Core Set cannot be built and training cannot proceed.
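The minimums above can be checked locally before you upload anything. The following is a minimal sketch, not part of Pecan's product: the function name `check_readiness`, the column names, and the date format are all assumptions chosen for illustration. It confirms that the entity and date columns exist, that the row count reaches 1,000, and that the date values parse.

```python
import csv
import io
from datetime import datetime

MIN_ROWS = 1000  # minimum row count stated in this guide


def check_readiness(csv_text, entity_col, date_col, date_format="%Y-%m-%d"):
    """Return a list of problems; an empty list means the basic minimums are met.

    Hypothetical helper for illustration only. The date format is an assumption;
    adjust it to match your export.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    columns = reader.fieldnames or []
    problems = [f"missing column: {col}" for col in (entity_col, date_col) if col not in columns]
    if problems:
        return problems

    row_count = 0
    bad_dates = 0
    for row in reader:
        row_count += 1
        try:
            datetime.strptime(row[date_col], date_format)
        except (TypeError, ValueError):
            # TypeError covers short rows where the date cell is absent (None)
            bad_dates += 1

    if row_count < MIN_ROWS:
        problems.append(f"only {row_count} rows; need at least {MIN_ROWS}")
    if bad_dates:
        problems.append(f"{bad_dates} row(s) have an unparseable {date_col}")
    return problems
```

To use it, read your exported CSV into a string and call, for example, `check_readiness(text, "customer_id", "signup_date")`. Pecan is described as tolerating missing values, so the date check here is a conservative extra rather than a platform requirement.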

Pecan supports the following data sources as of April 2026:

Warehouses and databases: Snowflake, Google BigQuery, Amazon Redshift, Databricks, ClickHouse, PostgreSQL, MySQL, Microsoft SQL Server, Oracle, and IBM Db2.

CRMs (read and write): Salesforce and HubSpot.

File uploads: CSV directly to the platform; Parquet files via AWS S3 or Google Cloud Storage.

Alpha connectors (not recommended for production): Shopify, Intercom, and Klaviyo. These integrations are labeled alpha as of April 2026 and carry a risk of instability.

If your data currently lives in spreadsheets, export it to CSV. Pecan parses column types automatically on upload.

Step 1: Define Your Business Question

From the Pecan home screen, click "New predictive flow." A conversational interface called Predictive Chat opens and asks four sequential questions:

- What is the focus of your prediction? (Examples: sales, customer behavior, inventory)

- What specific activity are you predicting? (Examples: churn, purchase, upsell, demand)

- What is the time frame? (Next week, next month, next quarter)

- Is this a one-time or recurring prediction?

Answer each question in plain language. Pecan converts your answers into a specification for the SQL notebook it will generate in the next step. Review the generated summary and click "Looks Good" when it accurately reflects your prediction goal.

Well-documented use cases as of early 2026 include customer churn, lead scoring, demand forecasting, customer lifetime value, revenue forecasting, upsell targeting, and fraud risk prediction.

Step 2: Review the Generated Nutbook

Pecan generates a SQL notebook, called a Nutbook, based on your question. You do not write SQL; the agent writes it. The Nutbook has two components:

Core Set: Historical examples of the behavior you are predicting. For churn, these are rows identifying which customers left and when. For demand, they are historical order volumes at specific dates.

Attribute Tables: Supplementary data that provides additional signals to the model. Examples include support ticket counts, product usage frequency, account age, payment history, plan tier, and geographic data.

Run the SQL cells in sequence by clicking "Run All." Each cell depends on the output of the previous one, so order matters. Verify the final output table looks correct before marking it as the Core Set.
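To make "looks correct" concrete, it helps to know the shape a churn Core Set should have: one row per entity, a marker date, and a label. The sketch below builds that shape in Python from a small list of customers. It is an illustration of the concept only, not the SQL that Pecan generates; the function name, the field names (`customer_id`, `cancel_date`, `marker_date`, `churned`), and the 30-day default horizon are assumptions.

```python
from datetime import date, timedelta


def build_churn_core_set(customers, marker_date, horizon_days=30):
    """Label each active customer by whether they cancel within the horizon.

    customers: list of dicts with 'customer_id' and 'cancel_date' (a date or None).
    Hypothetical structure for illustration; not Pecan's generated output.
    """
    window_end = marker_date + timedelta(days=horizon_days)
    rows = []
    for customer in customers:
        cancel = customer["cancel_date"]
        if cancel is not None and cancel <= marker_date:
            continue  # already gone at the marker date, so there is nothing to predict
        churned = cancel is not None and cancel <= window_end
        rows.append(
            {
                "customer_id": customer["customer_id"],
                "marker_date": marker_date,
                "churned": int(churned),
            }
        )
    return rows
```

When you review the real output table, the same questions apply: is every row tied to an entity and a date, are customers who had already left excluded, and does the label reflect only what happened after the marker date?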

One step that is easy to miss: if you explored the interface using Pecan's built-in mock data, you must explicitly replace it with your real data before training. This is a separate action in the interface, and mock data cannot be used to train a live model.

Step 3: Add Attribute Tables

Once the Core Set is marked, add attribute tables. Pecan auto-generates at least one if you have a connected data source. You can add more by pointing to additional tables in your warehouse.

For a churn model, useful attributes include support interaction counts, login frequency, plan tier, contract length, and days since last activity. For a demand forecast, useful attributes include historical promotional events, regional indicators, and seasonality markers.
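Two of the churn attributes named above, login frequency and days since last activity, can be derived from a raw event log. The following is a rough sketch of that derivation, assuming a hypothetical list of `(customer_id, event_date)` login events. It only counts events on or before the marker date, so the attribute does not leak information from the period being predicted. Pecan's own feature selection is automated and is not shown here.

```python
from collections import defaultdict
from datetime import date


def build_activity_attributes(events, as_of):
    """Summarize login events per customer as of a marker date.

    events: iterable of (customer_id, event_date) tuples (hypothetical input shape).
    Returns {customer_id: {"login_count": int, "days_since_last_activity": int}}.
    """
    login_counts = defaultdict(int)
    last_seen = {}
    for customer_id, event_date in events:
        if event_date > as_of:
            continue  # skip events after the marker date to avoid leakage
        login_counts[customer_id] += 1
        if customer_id not in last_seen or event_date > last_seen[customer_id]:
            last_seen[customer_id] = event_date
    return {
        customer_id: {
            "login_count": login_counts[customer_id],
            "days_since_last_activity": (as_of - last_seen[customer_id]).days,
        }
        for customer_id in last_seen
    }
```

Customers with no events before the marker date are simply absent from the result, which is one of the missing-value cases the platform is described as handling.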

The platform analyzes up to 1,500 signals internally and selects the most predictive ones. Adding more data does not degrade model quality; the selection process is automated.

Step 4: Train the Model

Click "Train Model" in the top-right corner of the Nutbook interface. A column mapping confirmation screen appears. Verify that the entity identifier and date column are correctly assigned, then run the built-in data validation check.

Training runs in two modes:

Fast mode: 15 to 30 minutes. Returns a model rated "good" accuracy. Use this for initial testing or lower-stakes planning decisions.

Production grade: Several hours. Uses advanced feature engineering and delivers a model rated "excellent" accuracy. Use this before pushing predictions to an operational system or CRM.

Pecan's published benchmarks claim over 90 percent accuracy across customer use cases, a 12 percent average reduction in churn, and models reaching production up to 32 times faster than traditional ML development. These figures come from Pecan's own marketing materials. Third-party reviewers on G2 and TrustRadius rate the platform between 7.2 and 7.9 out of 10, with some noting that output quality in edge cases lags behind more established ML platforms such as DataRobot. Customer support response time is a recurring criticism in published user reviews.

Step 5: Read and Deploy Predictions

After training completes, Pecan writes scored predictions to your chosen destination: a new table in your data warehouse, a custom field in Salesforce or HubSpot, or a file export.

For churn, the output table ranks customers from highest to lowest churn probability, alongside a confidence score and a list of the top contributing factors. For lead scoring, the table ranks open opportunities by conversion probability.

The Starter plan includes two prediction batches per month, and the Team plan includes ten. Additional batches cost $50 each across all plans. Because the model is batch-based, predictions are generated on a schedule, not continuously.

The output table joins to any existing customer or product table in a BI tool to build a working dashboard. A common first use is a view showing the top 20 accounts at highest churn risk, sorted by contract value. If you want to query the prediction output without setting up a BI layer, you can upload the scored CSV to a tool like VSLZ and analyze it in plain English to generate summary charts or identify patterns across segments.
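The top-20 view described above amounts to a join, a filter, and a sort. Here is a minimal sketch of that logic, assuming the scored output has been loaded as a list of dicts with hypothetical `customer_id` and `churn_probability` fields, and that contract values are available in a separate lookup. The actual column names in Pecan's output may differ.

```python
def top_risk_accounts(predictions, contract_values, n=20):
    """Return the n accounts with the highest churn probability, sorted by contract value.

    predictions: list of dicts with 'customer_id' and 'churn_probability' (assumed names).
    contract_values: dict mapping customer_id to contract value.
    """
    # Join: keep only predictions that match a known account
    joined = [
        {**p, "contract_value": contract_values[p["customer_id"]]}
        for p in predictions
        if p["customer_id"] in contract_values
    ]
    # Filter: the n riskiest accounts
    riskiest = sorted(joined, key=lambda r: r["churn_probability"], reverse=True)[:n]
    # Sort: largest contracts first, so the most valuable at-risk accounts lead the list
    return sorted(riskiest, key=lambda r: r["contract_value"], reverse=True)
```

In a BI tool the same result comes from an inner join on the entity identifier, a top-N filter on the probability column, and a descending sort on contract value.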

Pricing and Fit

Pecan's published pricing as of April 2026:

| Plan | Monthly price (billed annually) | Prediction batches | Row limit |
|---|---|---|---|
| Starter | $760 | 2 per month | 500 million rows |
| Team | $1,400 | 10 per month | 2 billion rows |
| Business | $2,000 | 60 per month | 5 billion rows |

The $760 per month minimum makes Pecan a mid-market product. It is well suited for companies that have a connected data warehouse, a well-defined recurring business question, and at least six to twelve months of transaction or user history. Companies still building their data infrastructure will spend more time on data readiness than on model configuration.
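Because extra batches cost $50 each, the cheapest plan depends on how many batches you need and how many rows you hold. The sketch below works through that arithmetic using only the published figures in this guide. It assumes extra batches can be added without a cap and ignores the Enterprise tier and any differences in features or support between plans, so treat the result as a starting point for a conversation with sales, not a quote.

```python
# Published figures from this guide (April 2026, annual billing)
PLANS = {
    "Starter": {"monthly_usd": 760, "included_batches": 2, "row_limit": 500_000_000},
    "Team": {"monthly_usd": 1400, "included_batches": 10, "row_limit": 2_000_000_000},
    "Business": {"monthly_usd": 2000, "included_batches": 60, "row_limit": 5_000_000_000},
}
EXTRA_BATCH_USD = 50


def monthly_cost(plan, batches_needed):
    """Plan price plus $50 for each batch beyond the plan's allowance."""
    details = PLANS[plan]
    extra_batches = max(0, batches_needed - details["included_batches"])
    return details["monthly_usd"] + extra_batches * EXTRA_BATCH_USD


def cheapest_plan(batches_needed, rows):
    """Cheapest plan whose row limit covers the dataset, or None if none does."""
    eligible = [name for name, details in PLANS.items() if rows <= details["row_limit"]]
    if not eligible:
        return None
    return min(eligible, key=lambda name: monthly_cost(name, batches_needed))
```

For example, four batches per month on Starter come to $760 + 2 × $50 = $860, which is still below the Team plan's $1,400. On these figures the row limit, not the batch count, is usually what pushes a Starter customer up to Team.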

Known Limitations

The platform is batch-based, not real-time. If your use case requires live scoring as new events arrive, Pecan is not the right choice.

Users without any SQL knowledge can complete the default Nutbook workflow, but fine-tuning auto-generated queries to handle edge cases in a specific data schema requires basic SQL familiarity.

The Shopify, Intercom, and Klaviyo connectors are labeled alpha as of April 2026 and should not be used for production workloads.

Multiple user reviews across G2 and TrustRadius cite delays in customer support response times, which can slow troubleshooting during initial setup. Some reviewers also report inconsistency in prediction quality across different data types and business domains.

Datasets below 1,000 rows will not produce a trainable model. For organizations with thin historical data, building up more event history is a prerequisite before model training can begin.

FAQ

Does Pecan AI require a data scientist to use?

No. Pecan AI's Predictive Agent is designed for non-technical business users, including operations managers, analysts, and founders. The platform converts a plain-English business question into SQL automatically, runs data validation, and trains a machine learning model without any coding. Users need to understand their business question clearly and ensure their data includes an entity identifier and a date column. The default workflow requires no further technical expertise, although fine-tuning the generated queries for edge cases in a specific schema calls for basic SQL familiarity.

How much data does Pecan AI need to build a prediction model?

Pecan requires a minimum of 1,000 historical rows to train a model. The platform's documentation notes that accuracy improves substantially with larger datasets. Data can be messy or incomplete; Pecan handles missing values and inconsistent formatting automatically. The data must include an entity identifier such as a customer ID and a date column that marks the historical moment the model learns from. Personally identifiable information (PII) is not required.

What data sources does Pecan AI connect to?

Pecan connects to major data warehouses including Snowflake, Google BigQuery, Amazon Redshift, Databricks, and ClickHouse, plus relational databases including PostgreSQL, MySQL, SQL Server, Oracle, and IBM Db2. CRM integrations include Salesforce and HubSpot with read and write access. CSV files can be uploaded directly, and Parquet files can be loaded via AWS S3 or Google Cloud Storage. As of April 2026, Shopify, Intercom, and Klaviyo integrations are labeled alpha and are not recommended for production workloads.

How long does Pecan AI take to train a prediction model?

Pecan offers two training modes. Fast mode completes in 15 to 30 minutes and returns a model rated "good" for accuracy. Production grade mode takes several hours and uses advanced feature engineering to deliver a model rated "excellent" for accuracy. Most users start with Fast mode for initial testing and switch to Production grade before deploying predictions to a CRM or operational data pipeline.

How much does Pecan AI cost per month?

Pecan's published pricing as of April 2026 starts at $760 per month billed annually for the Starter plan, which includes two prediction batches per month and up to 500 million rows of data storage. The Team plan is $1,400 per month with ten batches and two billion rows. The Business plan is $2,000 per month with 60 batches and five billion rows. Additional prediction batches cost $50 each across all plans. An Enterprise tier is available with custom pricing and onboarding support.